The AI Automation Gap in 2026 Performance Marketing



By Andrew Silard

Performance marketing is splitting in two. One path is "faster typewriter" AI: more copy, more variations, more assets. The time savings are real, but the upside is capped. The other path is AI-as-operator: agents running repeatable workflows against real data, with humans steering direction and handling judgment calls.

After talking with Tim Dalrymple (founder of Roadway) and other growth leaders deep in AI transformation, I see a simple three-stage model:

Stage 1: AI as copilot. AI generates ad copy or ad variations, helps with keyword research, does competitive analysis and suggests new creative angles. It is occasionally brilliant, but often wrong. You still have to use judgment and execute everything.

Stage 2: AI as operator. AI generates creatives, builds campaigns, launches new ads and optimizes your spend. You approve, reject, and intervene on edge cases.

Stage 3: Full self-driving. Set a goal, define an audience, and campaigns get built, monitored, and optimized autonomously. Zuckerberg talks about this as a north star for Meta.

Most teams are barely at Stage 1. A few are crossing into Stage 2. Nobody's at Stage 3 (and anyone who claims otherwise is selling something). The interesting question is why the jump from 1 to 2 is so hard, and what the teams that have made it did differently.

Stage 1: Where 93% of teams are stuck

The State of PPC 2026 survey (1,300 practitioners) found that only 7% are consistently using agentic automation. That's the real Stage 2 adoption number. The other 93% are still at Stage 1, or have not figured out how to integrate AI yet.

The gradient within Stage 1 tells the story: 59% use ChatGPT for ad copy, and 21% have tried AI agents for some kind of automation. But trying an agent once and building a system that runs your workflows are very different things. Meanwhile, the share of marketers who can demonstrate ROI from AI actually fell from 49% to 41% year over year.

The same survey found that AI saves the average PPC practitioner 5.2 hours per week. That's helpful, but it's mostly productivity on existing tasks, not a change in how work gets done.

That's Stage 1: lots of tool adoption, very little workflow transformation. And you can't prompt your way from here to Stage 2.

Why the jump to Stage 2 is a systems problem

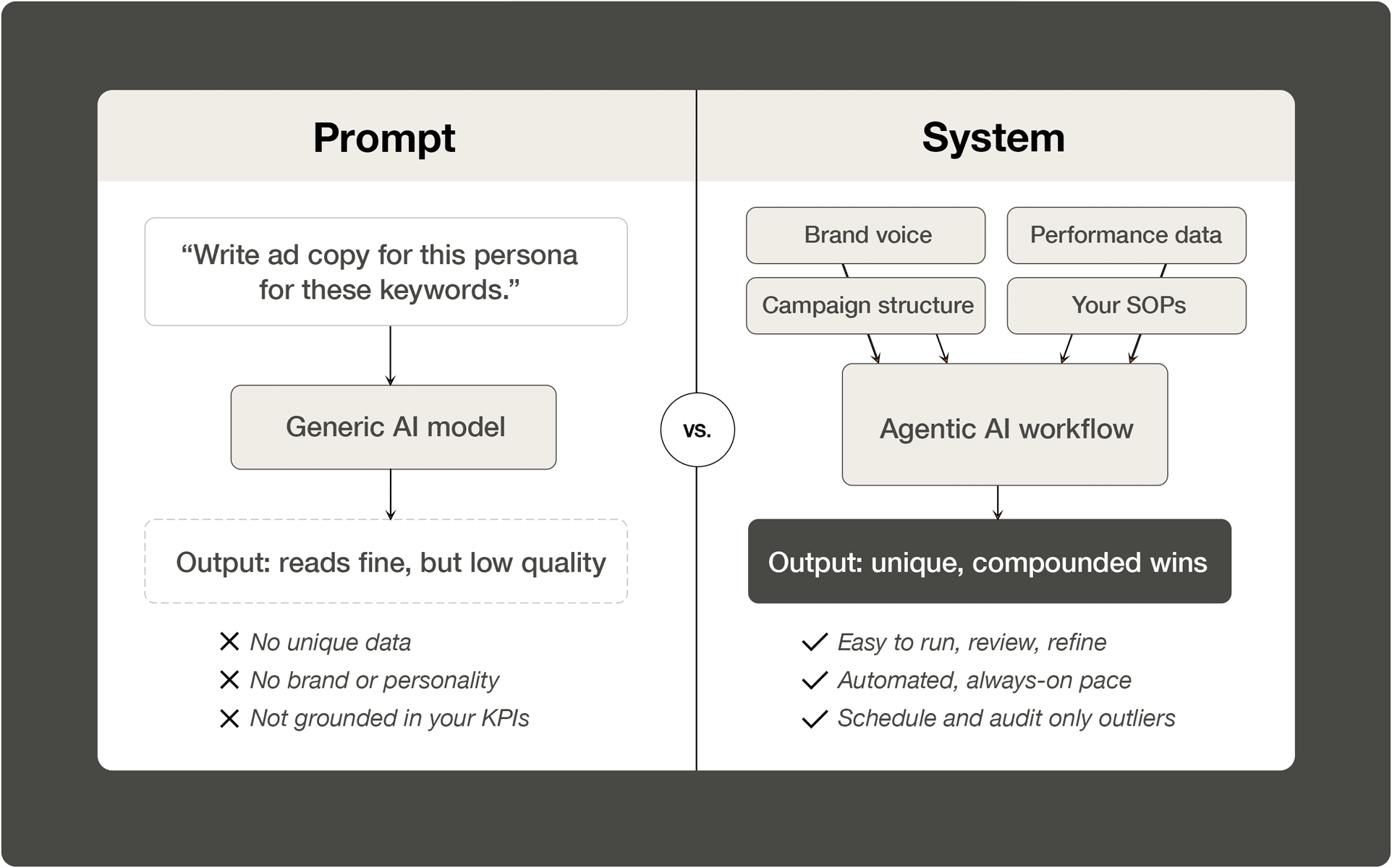

My LinkedIn feed is full of "AI marketing agent" repos and downloadable skill packs. Most are just fancy prompts: you feed them a topic and get back something that reads fine but clears only a low quality bar, with no unique data, no differentiated perspective, and no grounding in your actual KPIs.

Teams crossing into Stage 2 didn't get there by finding better prompts. They built systems.

A prompt is: "write me ad copy for this persona for these keywords." A system is: your documented brand voice, your performance data, your campaign structure, your optimization process—wired into agent workflows that can fact-check and optimize against real context.
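To make the "fact-check against real context" piece concrete, here is a minimal Python sketch, assuming the brand voice is documented as a list of banned phrases and a set of already-verified claims. `BrandContext` and `check_headline` are hypothetical names, not part of any product; the 30-character cap reflects the Google Ads responsive search ad headline limit.

```python
import re
from dataclasses import dataclass, field


@dataclass
class BrandContext:
    """Hypothetical slice of documented brand context an agent checks drafts against."""
    banned_phrases: list[str]
    max_headline_chars: int = 30  # Google Ads RSA headline limit
    approved_claims: set[str] = field(default_factory=set)  # e.g. {"30%"}


def check_headline(headline: str, ctx: BrandContext) -> list[str]:
    """Return the reasons a draft headline fails; an empty list means it passes."""
    problems: list[str] = []
    if len(headline) > ctx.max_headline_chars:
        problems.append(f"too long ({len(headline)} > {ctx.max_headline_chars} chars)")
    lowered = headline.lower()
    for phrase in ctx.banned_phrases:
        if phrase.lower() in lowered:
            problems.append(f"banned phrase: {phrase!r}")
    # Any percentage in the copy must match a claim the team has already verified.
    for claim in re.findall(r"\d+%", headline):
        if claim not in ctx.approved_claims:
            problems.append(f"unverified claim: {claim}")
    return problems


ctx = BrandContext(banned_phrases=["best in class"], approved_claims={"30%"})
print(check_headline("Best in class: save 50%", ctx))
```

A bare prompt has nothing to check a draft against; the same model wired to a context like this can reject copy that breaks documented rules before a human ever sees it.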

Austin Lau runs performance marketing at Anthropic. He built a custom agent system that encodes his process for mining Google Ads search terms: filter by unevaluated terms, sort by spend, and cross-reference each term's performance metrics against the keyword, campaign, and ad group that triggered it. The agent runs his SOP, outputs in his format, and surfaces negatives with reasoning he can audit before anything gets implemented. A generic downloaded skill can't do that.
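A minimal sketch of that kind of SOP in Python, assuming search-term rows have already been exported with spend, clicks, conversions, and the triggering keyword, campaign, and ad group. This is not Austin's actual system: the `SearchTermRow` fields, the `mine_negatives` function, and the thresholds (`min_spend`, `min_clicks`) are hypothetical placeholders for whatever rules your own process encodes.

```python
from dataclasses import dataclass


@dataclass
class SearchTermRow:
    term: str
    spend: float
    clicks: int
    conversions: float
    keyword: str      # keyword that triggered the search term
    campaign: str
    ad_group: str
    evaluated: bool = False  # True once a previous pass has reviewed this term


@dataclass
class NegativeCandidate:
    term: str
    spend: float
    reason: str  # written out so a human can audit it before anything is implemented


def mine_negatives(
    rows: list[SearchTermRow], min_spend: float = 50.0, min_clicks: int = 10
) -> list[NegativeCandidate]:
    """Hypothetical SOP: unevaluated terms, highest spend first, flag spend with no conversions."""
    pending = [r for r in rows if not r.evaluated]    # 1. only unevaluated terms
    pending.sort(key=lambda r: r.spend, reverse=True)  # 2. highest spend first
    candidates: list[NegativeCandidate] = []
    for r in pending:
        # 3. Judge the term's performance in the context of what triggered it.
        if r.conversions == 0 and r.spend >= min_spend and r.clicks >= min_clicks:
            reason = (
                f"{r.clicks} clicks, {r.spend:.2f} spend, 0 conversions; "
                f"triggered by '{r.keyword}' in {r.campaign} / {r.ad_group}"
            )
            candidates.append(NegativeCandidate(r.term, r.spend, reason))
    return candidates
```

Nothing is pushed to the ad account here; the function only returns candidates with a human-readable reason, which keeps the audit step in the loop.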

Is it faster? Not at the beginning. Prototyping is real work, and the run, review, refine loop takes time. But once it works, you schedule it, review exceptions, and move on. Over time, the wins compound, while campaigns get an always-on rigor humans can't match.
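The "review exceptions" step can be as simple as a guardrail that splits an agent's proposals into changes it may apply on its own and changes a human must look at. The sketch below assumes the agent proposes budget changes; `ProposedChange`, `triage`, and the 20% threshold are hypothetical, and the scheduling itself (cron, a workflow runner, or a platform feature) is left out.

```python
from dataclasses import dataclass


@dataclass
class ProposedChange:
    campaign: str
    current_budget: float
    proposed_budget: float
    rationale: str  # the agent's stated reason, kept for the reviewer


def triage(
    changes: list[ProposedChange], max_pct_change: float = 0.20
) -> tuple[list[ProposedChange], list[ProposedChange]]:
    """Split proposals into (auto_apply, exceptions) using a relative-change guardrail."""
    auto_apply: list[ProposedChange] = []
    exceptions: list[ProposedChange] = []
    for c in changes:
        if c.current_budget <= 0:
            # No baseline to compare against, so a human decides.
            exceptions.append(c)
            continue
        pct = abs(c.proposed_budget - c.current_budget) / c.current_budget
        if pct <= max_pct_change:
            auto_apply.append(c)
        else:
            exceptions.append(c)
    return auto_apply, exceptions
```

Loosening or tightening `max_pct_change` is one possible dial between reviewing everything and reviewing only the edge cases.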

I wish Stage 2 were as easy as "buy this tool, level up." Tooling is trending in that direction, and Roadway is one of the furthest along.

Where Stage 2 breaks (and why Stage 3 is still fiction)

Stage 2 works for templated, repeatable, data-in, recommendation-out tasks like creative variations, bid adjustments, budget reallocation, reporting, UGC production at volume. A study of 306 practitioners running AI agents in production found that 68% execute at most 10 steps before needing intervention. Reliability is still the top unsolved challenge.

It breaks on strategic judgment, creative concept development, cultural context, and cross-platform measurement. Every company has attribution gaps and data-system seams. Even a killer agent workflow can't fully paper over that.

The gap between Stage 2 and Stage 3 isn't incremental. It requires agents that can reason about strategy, understand brand nuance, and make judgment calls under ambiguity. Maybe models get there, but models alone aren't enough.

How to make the leap

The barrier to Stage 2 isn't budget or smarter prompts. It's the systems-building work most teams skip.

This is where opinionated platforms like Roadway can compress the timeline. After leading growth marketing at Webflow and Notion, Tim built Roadway to solve the infrastructure gap. Instead of asking every marketer to become a systems engineer, Roadway connects to ad platforms, encodes performance workflows, and surfaces the attribution intelligence agents need to make recommendations you can trust.

But even with better tooling, the person operating the system matters. I've been calling them "block-shaped operators." They combine deep performance marketing craft with enough technical fluency to build, customize, and maintain agent workflows that encode their process.

I've seen this pattern before. When Notion's growth team scaled, the highest-impact people weren't the channel specialists; they were the ones who could connect strategy to execution to measurement in a single loop.

If you’re at Stage 1, you’re not alone; most teams are there. The opportunity is at Stage 2. The barrier isn't adopting AI. It's building the systems underneath it that encode your data, context, and judgment.